Along the northwestern fringe of Greenland, there is a vast dome of ice, sloping above the rugged crust until it’s taller than the Empire State Building.

Prudhoe Dome, as it’s called, belongs to the Greenland Ice Sheet. Standing atop its windswept surface, peering out at an endless white horizon, it feels timeless, eternal. A world of ice that always was, and always will be.

It’s a dangerous illusion.

Recently, a team of scientists bored down into the ice. They drilled and drilled until they reached the frozen sediment beneath the crushing weight of the dome. Then they dragged the sediment up through the ice, from absolute darkness to the blinding light of the surface.

What they found was unnerving.

The sediments were full of a crystalline mineral called feldspar. When this mineral is shielded from sunlight, natural background radiation – from cosmic rays, for example – occasionally knocks electrons out of their atoms.

Defects in the crystalline structure of feldspar can trap these electrons. But when sunlight hits feldspar, photons – light particles – rip the electrons out of those traps.

Now, if you’re very careful, then in the total darkness of a laboratory, you can tease grains of feldspar out of sediment. You can hit them with infrared light to release their electrons. They glow as they escape, and the brightness of the glow tells you how many electrons were trapped.

The feldspar at the bottom of Prudhoe Dome therefore had an extraordinary story to tell. The more electrons were trapped within the feldspar, the longer it had been since the sediment was exposed to the Sun. And the longer it had been, the older the ice dome must be.

But that’s the thing. There weren’t a lot of electrons.

When exposed to infrared light, the glow of feldspar grains was so faint that it must have been only about 7,000 years ago that sunlight reached the sediments beneath Prudhoe Dome.
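If you like to see the logic as arithmetic, here is a minimal sketch of the textbook luminescence age relationship: the radiation dose the grains absorbed since they last saw sunlight, divided by the rate at which the surrounding sediment delivers that dose. The function name and both input values are hypothetical, picked only so the result lands near the figure above. They are not measurements from Prudhoe Dome, and the real analysis involves far more than one division.

```python
def luminescence_age_years(equivalent_dose_gy: float, dose_rate_gy_per_kyr: float) -> float:
    """Years since burial = absorbed dose (Gy) / dose rate (Gy per thousand years)."""
    if dose_rate_gy_per_kyr <= 0:
        raise ValueError("dose rate must be positive")
    return equivalent_dose_gy / dose_rate_gy_per_kyr * 1000.0


# Hypothetical inputs (assumptions, not data from the study):
# a faint glow corresponds to a small absorbed dose, and so to a young age.
example_age = luminescence_age_years(equivalent_dose_gy=21.0, dose_rate_gy_per_kyr=3.0)
print(f"{example_age:.0f} years since the grains last saw sunlight")
```

A brighter glow would mean a larger absorbed dose and therefore a longer burial, which is the reasoning the feldspar grains make possible.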

So, midway through the Holocene, not so very long ago, there seems to have been no ice – no ice at all – in a corner of northwestern Greenland that is now buried by a glacier 1,500 feet thick.

It turns out that vast accumulations of ice can build up, and melt, when the Earth’s average temperature changes by just one or two degrees Celsius.

The feldspar beneath Prudhoe Dome seems to tell us that, not so long ago, Greenland was a very different place than it is today – or than it could be in the not-so-distant future.

It’s a warning we’d be wise to heed, while we still can.

Welcome to the fourteenth episode of The Climate Chronicles, the third in our third season, “Into the Holocene.”

In this episode, we’ll grapple with one of the most important questions in the study of past climates – maybe the most important question.

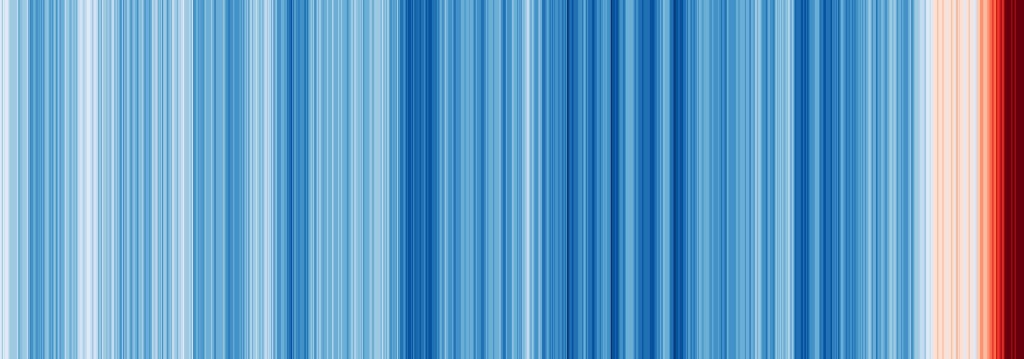

When was the last time that the Earth was as hot as it is today?

It may seem like a simple question, one that should be easy to answer. We’ve seen that there are many natural archives, and many ways of gaining proxies from those archives, meaning indirect information about past climate changes. It should be easy to find out when Earth was as hot as it is now – right?

Well . . . it’s complicated. But it’s incredibly important that we know.

Think about it. If it’s been, say, millions of years since the Earth was as hot as it is today, then ecosystems around the world never endured modern temperatures. It’s likely that a mass extinction will begin – or, if it’s already started, accelerate – if we don’t lower temperatures quickly.

You can only imagine how much worse that extinction could get if temperatures continue to rise, which they undoubtedly will in the years and decades to come.

But if it’s been thousands of years since the Earth was this hot, not millions, then we might have a little more time, and so a little more hope.

In that case, it’s more likely that we can preserve much of the biosphere by limiting the rise in global temperatures. Yes, it would be better if we stop that rise immediately, and it would be better still if we reverse it. But in this scenario, we’re unlikely to lose our planet – at least, most of it – if we heat it up by, say, another half a degree Celsius.

So, the answer to that simple question – when was the world last this warm? – could mean the difference between a truly dystopian future and a future that is survivable, maybe even better than the present.

Which is all to say that, in this episode, you’ll learn what the history of the early Holocene could tell us about the rest of your life. And you’ll learn why, even when it comes to the history of climate changes that really didn’t happen that long ago, there’s an awful lot we still don’t know for sure.

As industrialization spread in the nineteenth century, governments across the colonial world established what they called geological surveys. The primary purpose of these surveys was to identify economically valuable resources that could support industrial development, and in turn strengthen the state.

You see, industrialization had increased the value of resources that were hidden beneath the Earth – like iron, copper, or coal. It seemed that geologists could unlock those resources for exploitation.

Another goal of the surveys was to map soils and sediments to improve agriculture, permit drainage, or enable the construction of large-scale infrastructure. In a way, governments were laying down the foundations of today’s states, and the surveys helped them do that.

The Swedish Geological Survey was founded in 1858. It soon employed many young scientists, and some of them set to work mapping peat deposits.

Peat forms from partially decomposed plants that pile up in bogs. You can think of it as the first stage in the transformation of plants into coal. When peat is cut out of bogs and dried, it can be burned. For heating or industrial fuel, it’s a homegrown alternative to wood or coal.

To map peat deposits, Swedish geologists extracted cores from bogs. They knew that these cores contained ancient vegetation, and that the vegetation had accumulated gradually, in layers. But the knowledge didn’t do them much good. The vegetation had compacted into a mush – the peat – and it was impossible to figure out exactly how one layer was different from another.

Enter the geologist – and peat specialist – Ernst Jakob Lennart von Post. EH-rnst YAH-kob LEN-nart fon Post.

While working for the geological survey, Von Post noticed something extraordinary. Each layer in peat cores was full of microscopic pollen grains.

It was a simple discovery, but absolutely revolutionary. You see, each plant species produces its own, distinctive pollen grains. And of course, every plant sheds those distinctive grains – it’s what gives me terrible allergies every spring here in Washington, DC.

Von Post realized that the mix of pollen grains in each layer of a peat core reveals the kind of plants that once grew in the region. If the mix changed, it could only mean that new plant species had moved in, old species had died out – or both.

In 1916, Von Post announced his finding. More than that, he created diagrams that showed how the percentage of different pollen grains changed over time in peat cores. The diagrams for the first time revealed that birch and pine trees dominated Sweden just after the Pleistocene ended, then gave way to spruce and other tree species.
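The idea behind a pollen diagram can be sketched in a few lines: count the grains of each plant type in a layer, then express each count as a share of the layer's total. The counts and layer names below are hypothetical, invented only to mimic the birch-and-pine-to-spruce shift described above. They are not Von Post's data.

```python
def pollen_percentages(counts: dict[str, int]) -> dict[str, float]:
    """Convert raw grain counts for one layer into percentages of that layer's total."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("layer contains no counted grains")
    return {taxon: 100.0 * n / total for taxon, n in counts.items()}


# Hypothetical grain counts for two layers of one peat core (assumed values).
layers = [
    ("deeper, older layer", {"birch": 120, "pine": 60, "spruce": 20}),
    ("shallower, younger layer", {"birch": 40, "pine": 60, "spruce": 100}),
]

for name, counts in layers:
    shares = pollen_percentages(counts)
    print(name, {taxon: round(pct) for taxon, pct in shares.items()})
```

Plotting those percentages against depth, layer by layer, gives the kind of diagram that lets you watch one forest give way to another.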

Pollen analysis would become known as palynology, and it would evolve into one of the most powerful techniques for reconstructing not only climate change, but also responses by ecosystems and even human populations to climate change. And when combined with other emerging ways of reconstructing climate – the study of glacial deposits, for example, or fossils – palynology began to suggest that the climate of the early Holocene had been remarkably warm.

But there was a problem. Scientists could only estimate when the vegetation changes revealed by pollen, or the glacial advances and retreats visible in sediments, actually happened. They needed a way to precisely date these changes. Otherwise, they could never be sure that they all happened at the same time, and responded to the same thing – a warming of the Earth, for example – and they could never pinpoint what ultimately set them in motion.

Now, in the early twentieth century, archaeologists had the same issue. They could say that one culture was older than another, but they couldn’t say how old, exactly. Actually, they couldn’t do more than guess the age of anything that belonged to peoples without written records, which turned out to be most of the artifacts and ruins that actually existed.

As archaeologists professionalized their field – meaning they created the institutions, degrees, and formalized methods that set apart an academic discipline – they demanded more. Because it was hard for archaeology to be a serious science if it couldn’t answer the most basic questions about the past, like, when did things actually happen?

Strangely, it was the rise of nuclear physics that provided a solution. Right around the same time that Von Post announced his epiphany, physicists discovered isotopes. But this wasn’t immediately useful to archaeologists or paleoscientists. They would have to wait for the huge flows of money and brainpower that went into nuclear research during the Second World War. Now, nuclear physicists learned how to measure radiation more precisely, and some grew comfortable working across academic disciplines.

In 1940, two physicists – Martin Kamen and Sam Ruben – identified the radioactive carbon-14 isotope, and discovered that it formed when cosmic rays collided with nitrogen atoms in our atmosphere. This meant that plants and, in turn, animals had to continually absorb carbon-14 while they were alive.

A Manhattan Project physicist, Willard Libby, realized the implications. After plants and animals died, the carbon-14 isotope would begin to disappear from their remains through radioactive decay. The amount of the isotope left in their remains therefore created a kind of clock that showed when a plant or animal had lived.
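That clock can be written down as a short calculation. This is a minimal sketch of plain exponential decay, assuming the commonly cited carbon-14 half-life of about 5,730 years. The function name is made up for illustration, and the sketch ignores the calibration steps that real radiocarbon laboratories apply.

```python
import math

HALF_LIFE_YEARS = 5730.0  # commonly cited half-life of carbon-14 (assumed here)


def radiocarbon_age_years(fraction_remaining: float) -> float:
    """Years since death, given the fraction of the original carbon-14 still present."""
    if not 0.0 < fraction_remaining <= 1.0:
        raise ValueError("fraction must be greater than 0 and at most 1")
    # N(t) = N0 * (1/2) ** (t / half_life), solved for t
    return -HALF_LIFE_YEARS / math.log(2) * math.log(fraction_remaining)


# Half of the isotope left means one half-life has passed; a quarter means two.
print(round(radiocarbon_age_years(0.5)))
print(round(radiocarbon_age_years(0.25)))
```

The less carbon-14 that remains, the longer ago the plant or animal died, until so little is left that it can no longer be measured reliably.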

Libby now collaborated with archaeologists and, ultimately, worked out the techniques for radiocarbon dating. Finally, scholars of the past – including climate scientists – had a tool that let them date the past with real precision.

When applied to natural archives, radiocarbon dating seemed to clarify what had happened in the early Holocene. After the end of the Pleistocene, it appeared that the Earth warmed up dramatically, as though it couldn’t be happier to be done with those miserable ice sheets. Then, when the euphoria was over, it settled back down into a gradual, multimillennial cooling trend that continued into the present.

Some called the early Holocene warming the “postglacial climatic optimum.” But in 1957, the year Sputnik launched the Space Age, an ecologist named Edward Deevey, who applied radiocarbon dating to lake sediments, and a geologist named Richard Flint, who mapped Pleistocene glaciations, objected to that term. In the finest tradition of finicky scholars, they proposed their own name for the hottest part of the Holocene: the Postglacial Hypsithermal Interval.

Not surprisingly, it didn’t stick.

Still, Deevey and Flint had reason to be happy – if the early Holocene really was warmer than their present.

As they coined their Hypsithermal Interval, the chemist and climatologist Charles David Keeling designed the first instrument that could precisely measure the concentration of carbon dioxide in the atmosphere. Keeling soon found something astonishing. Every year, carbon dioxide levels were a little higher than they had been.

We now know that human greenhouse gas emissions had already warmed our planet. And if Earth was heating up from a relatively cold place, there was time to avert disaster.

The 1957 launch of Sputnik did more than usher in a new era of space travel. It also transformed the Cold War.

You see, a rocket big enough to lift a satellite into orbit is also powerful enough to send a nuclear bomb halfway around the world – in a matter of minutes.

The Soviet Union had detonated its first atomic bomb just eight years before Sputnik blasted into space. And it had exploded its first hydrogen bomb only two years before the launch.

Hydrogen or thermonuclear bombs can be orders of magnitude more powerful than atomic bombs. When the Soviets paired hydrogen bombs with their powerful rockets, a new world was born: a world that was always at most half an hour from annihilation.

As I mentioned in episode 4, in this new world, peace between superpowers seemed to depend on something called Mutually Assured Destruction, or MAD. Never has there been a more appropriate acronym.

Now, in a future episode, we’ll discuss how nuclear war could change Earth’s climate. For now, let’s break down MAD in greater detail than we did before. It’s a doctrine that assumes a superpower won’t launch a nuclear attack on a rival superpower given three conditions:

First, the rival can detect, or at least assign blame for, an incoming attack.

Second, the rival can retaliate with an all-out nuclear assault of its own.

Third, the leaders of the superpower believe that the rival can and will respond with that nuclear assault.

According to the MAD doctrine, if any of these conditions fail, total destruction becomes a very real possibility.

Now, condition number two – having what Cold War planners called a second-strike capability – was probably the hardest one to maintain. The challenge was simply that a nuclear surprise attack, delivered by rocket, would be incredibly fast and destructive. It was possible to imagine that an attack could destroy every nuclear weapon in a rival superpower, or else the entire command structure – the President, say, and all his ministers and military officers – before anyone could give the order to retaliate.

So, systems had to be established that could, within minutes, coordinate the launch of the world’s most powerful machines through space to the other side of the Earth. They had to explode just a few kilometers from cities or military bases or industrial sites. And it wasn’t enough to send just a few rockets. The retaliation would have to be so devastating that it would, effectively, destroy the aggressor. Otherwise, some president or first secretary might just decide that a nuclear attack was worth the cost.

Building the systems responsible for detecting and retaliating to a nuclear strike was a challenge unlike any in human history. There was something insane about it, of course, and there still is. Systems on, under, and above the Earth continue to deter nuclear war between superpowers. And because they’re extremely complex, and ultimately managed by fallible human beings, they’re precarious. We have, repeatedly, come to the brink of nuclear war. We’ve been lucky, but our luck might not hold.

Now, the only fail-safe way of maintaining a second-strike capability was to station missiles where they couldn’t be destroyed in a surprise attack – at least, not easily. In the United States, the Air Force proposed a fantastically expensive scheme to build nuclear missile silos on the Moon. Air Force personnel stationed at a lunar base would detect a Soviet assault on the United States seconds after it was launched, but it would take days for any Soviet missile to reach them. They’d have plenty of time to retaliate.

As you learned in episode 4, a slightly less crazy idea, named Project Iceworm, imagined digging a vast tunnel system within the Greenland Ice Sheet. There, the Air Force could hide hundreds of nuclear missiles. The ice would conceal and protect them in the event of a Soviet first strike. When the attack was over, the missiles would explode out of the ice and devastate the Soviet Union.

Everything depended on the ice itself. You see, ice sheets move. I don’t just mean that they expand or retreat when the Earth cools down or warms up. I mean their ice is literally always on the march.

Yes, it’s at a glacial pace, but that can be fast enough to matter. In general, the ice in ice sheets is always flowing from the interior of an island or a continent to the sea. That’s because the continual accumulation of snow far from the sea eventually creates such a massive heap of ice that gravity begins to flatten the ice and push it outward. It’s not unlike pouring batter into a pan and watching as the batter flattens out into a pancake.

So, Greenland’s ice sheet was clearly a dynamic thing. Its ice moved. But if that ice didn’t move fast, then tunnels hollowed out of the ice would be stable. Project Iceworm could proceed. But if the ice moved too much, then of course any tunnels would be crushed – and so would their nuclear missiles.

And yes – let’s just say that American disrespect for Danish sovereignty, in Greenland, isn’t exactly a new phenomenon.

Now, at the US Army Corps of Engineers, scientists in the so-called Snow, Ice, and Permafrost Research Establishment – rebranded in 1961 as the Cold Regions Research and Engineering Laboratory – were tasked with establishing just how stable the Greenland Ice Sheet really was. In 1959, the Army set up a base on the ice sheet – Camp Century, as you heard in episode 4 – and that’s where its scientists worked to establish whether Iceworm would be feasible.

Army scientists realized that drilling into the ice sheet was the best way to establish how fast it moved. It’s because, remember, ice cores contain layers. Each layer consists of compressed snow and other material – like volcanic ash – that originally fell on the surface of the ice sheet. By studying the thickness or even the angle of these layers, scientists can determine how fast the ice sheet flows, or flowed, from the interior to the sea.

But how do you drill into a wall of ice that’s so cold and so tightly packed together that it might as well be rock?

Well, Army engineers decided to refine existing designs for thermal drills. These drills use electricity to heat up a metal ring that melts ice. Obviously, they seemed perfect for boring deep into the Greenland Ice Sheet.

When a thermal drill was set up at Camp Century, between 1961 and 1963, it sank into the ice until it reached about 900 feet beneath the surface.

The electricity for the drill came from the Army’s first field-deployed, portable nuclear reactor. In theory, it could use about 40 pounds of uranium to substitute for thousands, even millions of gallons of diesel fuel. For bases in the world’s most extreme environments, it seemed like a godsend. It even allowed the roughly 200 men at Camp Century to take hot showers whenever they wanted, in one of the coldest environments on Earth.

But at 330 tons fully assembled, the reactor, named the PM-2A, was just a bit less portable than the military suggested. It had to be assembled from pieces flown, one at a time, aboard the largest transport planes in the Air Force.

And reactors like the PM-2A were dangerous – to everything and everyone around them.

Take the PM-3A, the next reactor in the series. Soon after Camp Century went up, Army engineers assembled it on the other side of the world, at a Navy base in McMurdo Sound, Antarctica. But over ten years, the PM-3A suffered no fewer than 438 malfunctions, culminating in a containment failure that spilled radioactive waste across the Antarctic environment. No wonder its nickname: “Nukey-Poo.”

Back in Greenland, the PM-2A didn’t fare much better. It, too, was leaking, and by 1964 it was clear that it had to be dismantled. Over 18 months, a group of soldiers shut it down and disassembled it, piece by piece, in a tunnel some 40 feet beneath the surface of the ice sheet. It was the first time anyone had tried to take apart a nuclear reactor.

The Commanding Officer, Joseph Franklin, inspected the power plant after his men shut it down. He knew it was dangerous, so he went in alone. It’s a good thing he did. In two minutes, his body absorbed some 60 rads of radiation. It wasn’t a lethal dose, but it may be to blame for the cancers he endured in old age.

Camp Century, in other words, was a radioactive mess that, the Army hoped, would set the stage for an unimaginably bigger and more dangerous radioactive experiment – 4,000 kilometers of icy tunnels, jam-packed with nuclear bombs.

But Camp Century is also where scientists learned to extract ice cores, and in the process clarified the peril posed by a changing climate.

They soon found that, at great depths, where the ice was truly ancient, the thermal drill was no longer efficient. Another approach was needed.

Ironically, it was exactly the technology that was partly responsible for the greenhouse gases already changing the atmosphere. In 1964, the cable-suspended electromechanical drill, adapted from the oil industry, would begin to allow scientists to dig down, far beneath 900 feet.

Finally, in July 1966, they hit bedrock, 4,400 feet beneath the ice surface. Now they pulled up the drill, and exhumed a core that, when thoroughly analyzed, told a remarkable story.

First, it was immediately clear that Iceworm was impossible. The ice sheet moved too fast. The tunnels and their bombs would be smashed to pieces.

Now, I don’t know what would have happened if the US military had actually built Iceworm. I can easily imagine how the secret construction of a labyrinth of nuclear missile silos, halfway between the US and the USSR, could have sparked the nuclear war it was meant to deter.

Maybe the world’s first ice core saved Greenland from a nuclear attack that would have sent radioactive meltwater cascading into the Atlantic Ocean.

Second, the core would eventually reveal that some of the most extreme climate changes of the Pleistocene and early Holocene happened suddenly, in a matter of years, perhaps decades. It was as though the Earth could suddenly flip into entirely new states – as we learned in our first season.

But third, the core appeared to confirm that, indeed, between about 9,000 and 6,000 years ago, the climate of Greenland had been a good deal warmer than it was in the twentieth century.

One of the craziest schemes of the Cold War, a scheme full of nuclear danger, seemed to reveal something that we might now find comforting. The world was not as warm as it could be, when we started heating it up.

But it wouldn’t be long before a very different kind of evidence began to suggest a very different and much less reassuring story.

How could the early Holocene have been warmer than the twentieth century?

In 1976, an answer seemed to come not from ice cores, but from deep sea sediments. Scientists used the oxygen isotopes in these sediments to reconstruct Pleistocene climate changes, and found that the changes precisely matched the Milankovitch Cycles in Earth’s orbit that we mentioned in earlier episodes.

Climatologists soon accepted that these cycles had triggered – or paced – the glacials and interglacials of the Pleistocene. It wasn’t long before a group of scientists argued that the precession cycle – remember, that’s the wobble in the axis of Earth’s rotation – had catalyzed the warming of the early and middle Holocene.

With one crucial caveat.

Think about what causes the seasons. It’s the tilt of the Earth relative to the Sun, right? If the northern hemisphere is tilted towards the Sun, it’s summer in that hemisphere, and winter in the southern hemisphere. If the southern hemisphere is tilted towards the Sun, then it’s summer there, and winter in the north.

I know, this is grade school stuff.

But here’s the thing. The precession cycle alters the point at which the seasons happen in Earth’s orbit around the Sun. And because the orbit isn’t a perfect circle, the precession cycle changes when, in each season, in each hemisphere, Earth is closest to the Sun.

If the precession cycle were to blame for early and mid-Holocene warming, climatologists concluded, then there were different phases in that warming, one for each season.

It was a major breakthrough. Holocene warming was beginning to look less like a worldwide, multimillennial trend, and more like a series of seasonal changes that had different impacts in each hemisphere.

But still, reconstructions of Earth’s climate and orbit both suggested that warming had occurred. The same couldn’t be said for a new kind of evidence that, by the 1980s, had allowed scientists to forecast the future of Earth’s climate – and revealed the danger of increasing greenhouse gas emissions.

This evidence came from climate models – or in other words, computer programs, based on equations, that can simulate Earth’s climate and how it changes.

Now, it’s kind of remarkable that these models came of age exactly as human emissions started to warm our planet. They certainly had a very long history.

I suppose you could start that history with the development of mechanical computers in the ancient world. Around 100 BCE, for example, scholars in Greece developed a little machine that used no fewer than 37 bronze gears to predict astronomical cycles. In 1901, Greek sponge divers found this machine in a shipwreck near the island of Antikythera – hence its name, the “Antikythera Mechanism.”

You could also begin the history of climate modelling almost 2,000 years later, when European merchants and colonizers fanned out across the Earth – and as European empires attempted to consolidate their rule. To explain the environments they encountered, and to imagine links between diverse parts of growing empires, scholars began to identify patterns in what we now call atmospheric and oceanic circulation.

Colonization strengthened capitalism, capitalism encouraged industrialization – and industrialization inspired scientific breakthroughs that, by the early twentieth century, spurred the technological advances that gave rise to aircraft.

Aviation, in turn, allowed scientists to study climate not just horizontally, across the Earth, but also vertically, from sea level to the edge of space. Instruments in aircraft and rockets provided revolutionary new insights into how, exactly, air flowed across our planet.

Around the turn of the twentieth century, some scientists also tried, for the first time, to estimate the balance between the amount of energy that reached Earth from the Sun, versus the amount that left. And some considered how human action could alter that balance.

In 1896, the Swedish physicist and chemist Svante Arrhenius famously calculated that doubling the amount of carbon dioxide in the atmosphere could warm the Earth by about five or six degrees Celsius (as we’ll see, thankfully the real value is a bit lower). And he predicted that industrial activity would slowly begin to increase carbon dioxide concentrations in the atmosphere. It’s often regarded as the first description of human-caused global warming.

We could alternatively start the history of climate modelling with a set of equations, developed in 1904 by the meteorologist Vilhelm Bjerknes to describe atmospheric processes in every part of the world. The equations were far too complex to sort out by hand. But after the Second World War, the mathematician John von Neumann and the meteorologist Jule Gregory Charney used military funding to run the equations on newly capable computers.

Numerical weather predictions, based on the physics of Earth’s climate, now became possible. So did crude computer simulations that accounted for global flows of energy and water to forecast decadal weather trends. These were – you guessed it – the first climate models.

The models were called general circulation models, or GCMs, because they accounted for the flow of air across the Earth. But the giant, room-sized computers of the 1960s were less powerful than your phone, and that limited the spatial resolution with which the models could represent the circulation of the atmosphere.

You see, the models divided the Earth into grids, with each cell in the grid up to 1,000 kilometers across (compare that to around 10 kilometers for today’s cutting-edge models). And even the crudest simulations, with incredibly low spatial resolution, took weeks of computing time to run.
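To get a feel for what that difference in resolution means, here is a rough back-of-the-envelope sketch. It assumes Earth's surface area is about 510 million square kilometers and pretends every grid cell is a simple square, which real models do not do. The function name is hypothetical, and the sketch ignores the vertical layers that models also stack above each cell.

```python
EARTH_SURFACE_KM2 = 510e6  # approximate surface area of Earth (assumed round figure)


def approx_cell_count(cell_width_km: float) -> int:
    """Rough number of square surface cells of a given width needed to tile the globe."""
    if cell_width_km <= 0:
        raise ValueError("cell width must be positive")
    return round(EARTH_SURFACE_KM2 / cell_width_km**2)


coarse = approx_cell_count(1000)  # early general circulation models
fine = approx_cell_count(10)      # today's cutting-edge models
print(coarse, fine, fine // coarse)
```

On those rough numbers, an early model had a few hundred columns of air to work with, where a modern one has millions.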

This is why one of the most remarkable realities of climate science is that when some of these first climate models simulated how much the Earth would warm, given a doubling of atmospheric carbon dioxide concentrations, they pretty much got it right.

In 1979, a famous report by the National Academy of Sciences used crude GCMs to conclude that the world would warm by 1.5 to 4.5 degrees Celsius if the concentration of carbon dioxide doubled in the atmosphere.

Scientists have only recently refined this value for climate sensitivity, as it’s called, to between 2.5 and 4 degrees Celsius.
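Here is a minimal sketch of how a climate sensitivity value turns into a warming estimate. It relies on a common simplification: that eventual warming scales with the number of doublings of carbon dioxide. The function name is hypothetical, and the baseline of 280 parts per million is an assumption, a frequently cited pre-industrial concentration. Real models do a great deal more than this one line of math.

```python
import math


def equilibrium_warming_c(co2_ppm: float, baseline_ppm: float,
                          sensitivity_c_per_doubling: float) -> float:
    """Eventual warming in degrees Celsius, assuming warming scales with CO2 doublings."""
    if co2_ppm <= 0 or baseline_ppm <= 0:
        raise ValueError("concentrations must be positive")
    return sensitivity_c_per_doubling * math.log2(co2_ppm / baseline_ppm)


BASELINE_PPM = 280.0  # assumed pre-industrial concentration

# Doubling CO2 under the low and high ends of the 1979 range, and the narrower recent range.
for sensitivity in (1.5, 4.5, 2.5, 4.0):
    warming = equilibrium_warming_c(2 * BASELINE_PPM, BASELINE_PPM, sensitivity)
    print(f"sensitivity {sensitivity} -> {warming:.1f} degrees C for a doubling")
```

By construction, a full doubling simply returns the sensitivity itself. The function becomes more interesting for concentrations in between, where the logarithm means each added unit of carbon dioxide warms a little less than the one before.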

Now, when people talk about climate models, they usually think about the future. After all, climate models have the unique ability to simulate how Earth’s climate could change in response to the greenhouse gases we’ve already pumped into the atmosphere, and to the gases we could send there in the decades and centuries to come.

But climate models also help us understand the present. They help us interpret the truly vast flows of climate information provided by worldwide networks of weather instruments, like thermometers, not to mention Earth-observing satellites.

And climate models can hindcast. You don’t have to start their simulations in the present. You can, alternatively, start them hundreds, thousands, or even millions of years ago. Beginning with a reconstruction of Earth’s environment in the distant past – brought to us by the proxies in natural archives – models can run forward through time.

Now, as we’ve seen, the climate reconstructions we create using natural or even societal archives are always imperfect. Maybe they don’t tell us what happens in every season, or what happens across the whole Earth. Maybe they can only indirectly reveal changes in the flow of air or water – in atmospheric or oceanic circulation, in other words. Maybe their resolution is really low, meaning they can hint at what happened from century to century, but not from year to year, let alone month to month or day to day. Unfortunately, even reconstructions created using many proxies in many archives are far from perfect.

That’s the value of model hindcasts. They can help us get a sense of the information that’s missing from reconstructions.

Of course, there’s a bit of a chicken and egg problem here, because the model hindcasts are only as good as the information we have about past environments, but the hindcasts are supposed to fill in the information we don’t have. But you now know that there’s no such thing as a perfect method for peering into the deep past.

Now, by the late 1980s, scientists began to use models to hindcast the climate of the Holocene. But what they found was perplexing. Rather than simulating early Holocene warmth, followed by a multimillennial cooling trend, the models suggested that the early Holocene had been colder than the present, and that the world had actually been warming for thousands of years.

By 2014, scientists had a term for the apparent mismatch between what natural archives seemed to reveal about the early and mid-Holocene climate, and what models simulated. They called it the Holocene Temperature Conundrum.

And the implications were profound. Because if the models were right, then the Earth could already be hotter than it has been for millions of years. Ecosystems may already face temperatures far beyond any they’ve endured before. In that case, a climate disaster of unimaginable proportions could already be underway.

The Holocene Temperature Conundrum is still with us today. And it’s one of the most humbling things in climate science.

Think about it.

We don’t even know for sure whether the Earth was hotter halfway through the Holocene, about 6,000 years ago, than it was midway through the twentieth century, or even than it is today.

Consider all the work – over decades, centuries, by thousands of scholars, all over the world – to dredge up microscopic pollen from peat bogs, or to drill to the bedrock beneath mountainous glaciers, or to invent machines that peer into the future. All that labor, at all that cost, and we still don’t know exactly what happened over the past 6,000 years, or in other words just the most recent 2% of human history!

How much can we really say about climate change over the other 294,000 years of our past, give or take – let alone the Pleistocene as a whole, or the even more distant past?

It’s worth some reflection. There’s a lot we know – or think we know – about the history of climate change. But we need to admit that our understanding of both the past and the future is far from complete.

Now, I do think that we can safely conclude that some parts of the world were indeed at least as warm in the early or middle Holocene as they were in the twentieth century. Parts of Greenland, for example. It’s just hard to argue with clear evidence for a vanished ice dome.

I also think that we can say that, between about 10,000 and 6,000 years ago, Northern Hemisphere summers were probably about as hot as they have been, on average, in the first two decades of the twenty-first century.

What’s still not clear is whether global temperatures were as high as they are now – or at least, as they were recently.

You see, our climate reconstructions are often biased towards summer temperatures on land.

Plants, for example, grow and reproduce in a growing season, which means summer across much of the world. The distribution of plants in an environment tends to reflect temperatures and precipitation in the summer. So, pollen fossils in peat bogs tell us what summer conditions were like – but not necessarily winter.

Other natural archives have similar biases. Even ones you might not expect. Lakebed sediments, for instance. We often use microscopic plant and animal remains in these sediments as proxies for climate change. But again, plants and animals tend to thrive in the summer. And because lakes are, by definition, removed from the sea, lakebed deposits are biased towards conditions on land.

It may be telling that when climate models are asked to simulate summer temperatures in the Northern Hemisphere, they show profound warming in the early and middle Holocene. But when they’re asked to simulate global temperatures, they show exactly the opposite. Now the world seems cold in the early Holocene, and it has been warming – gradually – ever since.

More evidence comes from reinterpretations of sea surface temperature reconstructions. These reconstructions were created using the microscopic remains of plankton in ocean sediments. But it turns out that they, too, were biased towards summer temperatures. When scientists attempted to correct for this bias, they found that sea surface temperatures may have been similar to what climate models simulated.

So, that could be a way out of the Holocene Temperature Conundrum. Maybe our proxies showed us what happened in the summer, especially on land, while our models told us what happened globally. It would be a tidy solution. But new research may indicate that reality isn’t so simple.
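To make the seasonal-bias idea concrete, here is a minimal toy sketch in Python. It is not drawn from any real reconstruction or climate model: the function names and every number in it are invented assumptions, chosen only to show how a record that captures summers alone can trend in the opposite direction from the annual average.

```python
# Toy illustration only. All values are invented assumptions,
# not data from any proxy reconstruction or model hindcast.

def seasonal_anomalies(years_ago):
    """Return hypothetical (summer, winter) temperature anomalies in deg C,
    relative to the present, for a time `years_ago` in the Holocene."""
    fraction = years_ago / 10_000   # 1.0 in the early Holocene, 0.0 today
    summer = 1.0 * fraction         # assumed: past summers were warmer
    winter = -1.5 * fraction        # assumed: past winters were colder
    return summer, winter

def annual_mean(summer, winter):
    """Average the two seasons into a crude 'annual' anomaly."""
    return (summer + winter) / 2

# A summer-biased "proxy" only ever sees the first number of each pair.
for years_ago in (10_000, 6_000, 2_000, 0):
    summer, winter = seasonal_anomalies(years_ago)
    print(years_ago, "years ago:",
          "summer-only record", round(summer, 2),
          "| annual mean", round(annual_mean(summer, winter), 2))
```

In this made-up example, the summer-only record cools toward the present while the annual mean warms, simply because the assumed winter change outweighs the assumed summer change. Whether anything like that balance held in the real Holocene is exactly what remains in dispute.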

You see, it’s not impossible to reconstruct winter temperatures using proxies in natural archives.

For example, every winter, cold, dry winds blow from the interior of Asia toward the Pacific Ocean. This is called the East Asian Winter Monsoon. It may seem like a strange name, because the word monsoon is so strongly associated with rainfall. But a monsoon is really just a seasonal change in wind direction, caused by differences in heating between land and ocean.

The point is that this East Asian Winter Monsoon is stronger when winters are cold, and that it’s possible to use many different proxies – like deposits of windblown dust – to reconstruct its strength. Reconstructions using these proxies suggest that the monsoon was in fact weaker midway through the Holocene, meaning that winters in at least part of Asia were actually relatively warm then.

So, maybe the models are missing something. Maybe there was less dust in the atmosphere during the middle of the Holocene, for example, than there was later. That could have warmed the Earth. Cores extracted from the bottom of the ocean suggest as much. Climate model hindcasts tend to have difficulty accounting for dust in the atmosphere, partly because it’s so hard to establish how much there was or where it came from.

We’re left with an enduring mystery. And really, that’s a wonderful thing. A mystery inspires new research, new debate, and progress in creating better climate reconstructions or model simulations.

For scholars, there’s really nothing better than a mystery.

But in my view, the Holocene Temperature Conundrum won’t be around much longer. There are too many potential ways forward. The science is developing too quickly. I suspect that we’ll soon have a much clearer understanding of just how much danger we’re in. Of just how unusual today’s temperatures really are in Holocene history.

The science that tells us about climate change emerged, in part, from the very forces changing our climate.

Many of the scientists who, for the first time, found evidence for past climate change, or developed methods to interpret that evidence, worked for colonial governments, world-dominating militaries, or oil companies.

The technologies climate scientists invented or perfected would not have existed without those governments, or militaries, or companies. Without them, the motives and the funding necessary to deploy those technologies would have been unimaginable.

In a way, those responsible for global warming inadvertently created the tools necessary to detect how they were changing the Earth. And, perhaps, to stop it.

Greenland’s history tells us that we’d better hurry.

There’s still time to limit global warming to 2 °C, relative to the world’s average temperature in the late nineteenth century. If we manage that, and if we then drain carbon dioxide from the atmosphere, it may be possible to preserve Greenland’s ice sheet.

But what if the world warms until it’s three degrees hotter than that late nineteenth-century average? Climate models indicate that that’s where we’re headed by the end of this century.

In that case, the sediments below Greenland’s ice sheet, and the layers within the ice itself, warn of a bleak future. Melting at the fringes of the ice sheet – at Prudhoe Dome and all along the coast – would, in time, reach the interior. Sea levels would soar until every coastal city drowns.

Much would be lost – but it would take a long time. With great suffering and hardship, maybe we could adapt.

Now, what if we double down on fossil fuels? What if we stop regulating greenhouse gas emissions? What if we turn our backs on the green economy?

The Earth could warm by over four degrees Celsius by the end of this century – with much more warming to come. The Greenland ice sheet would take time to melt. There’s just so much ice. But the melting would come faster and faster.

Eventually, sea levels could rise too quickly. Too fast for people to handle; for ecosystems to cope. In a world of runaway warming, economies could collapse. Wars could spread. Billions could die.

The sediments beneath Prudhoe Dome warn us that it’s possible. But they also suggest we can still save the Greenland Ice Sheet.

We can cancel the Apocalypse.

For Teachers and Students

Review Questions:

- What is palynology? What can it reveal about past environments?

- What are climate models? How can they tell us about past, present, and future climates?

- What is the Holocene Temperature Conundrum, and what are its implications?

- In what ways has “the science that tells us about climate change emerged, in part, from the very forces changing our climate”? Feel free to consider other episodes.

Key Publications:

Cartapanis, Olivier et al., “Complex spatio-temporal structure of the Holocene Thermal Maximum.” Nature Communications 13:1 (2022): 5662.

Essell, Helen et al., “Rethinking the Holocene temperature conundrum.” Climate Research 92 (2024): 61-64.

Jiang, Shiwei et al., “Enhanced global dust counteracted greenhouse warming during the mid- to late-Holocene.” Earth-Science Reviews 258 (2024): 104937.

Kaufman, Darrell S. and Ellie Broadman, “Revisiting the Holocene global temperature conundrum.” Nature 614:7948 (2023): 425-435.

Martin, Kaden C. et al., “Greenland ice cores reveal a south‐to‐north difference in Holocene thermal maximum timings.” Geophysical Research Letters 51:24 (2024): e2024GL111405.

Thompson, Alexander J. et al., “Northern Hemisphere vegetation change drives a Holocene thermal maximum.” Science Advances 8:15 (2022): eabj6535.

Walcott-George, Caleb K. et al., “Deglaciation of the Prudhoe Dome in northwestern Greenland in response to Holocene warming.” Nature Geoscience (2026): 1-6.

Video and Audio Credits:

Audio: AIVA, Podbean, LALIA.

Video: Runway.

Funding provided by Georgetown University’s Earth Commons.
